On November 1, 2023, Microsoft officially launched its highly anticipated Copilot AI. This is an incredible tool for Microsoft customers. From Word to Teams, Outlook to Excel, Copilot can comb your internal data, interpret it, create deliverables, offer insights, and much more.

If that sounds a lot like ChatGPT, it is… and it isn’t.

In fact, it’s crucial to understand the differences between these tools if you’re going to make informed policy decisions for your organization. This is especially true if you’re not getting help from cybersecurity managed services.

Here’s everything you need to know about Microsoft Copilot vs. ChatGPT.

1. Copilot vs. ChatGPT: The basics

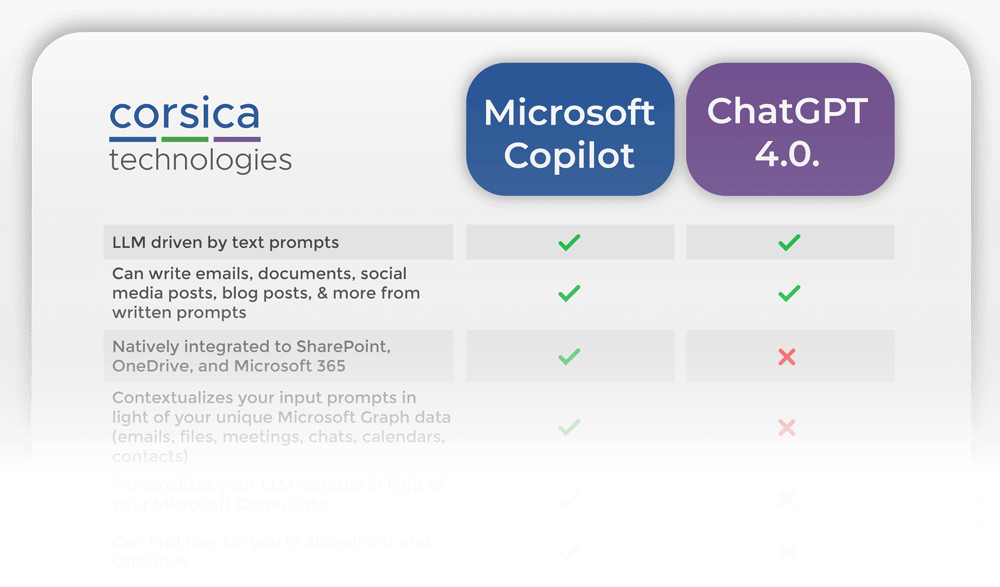

Copilot and ChatGPT are superficially similar in one regard: both are built on LLMs (large language models). This means they both operate on written prompts and produce written results.

However, that’s where the similarity ends. From datasets covered to use cases and more, these two AIs are vastly different.

In a nutshell, Microsoft has taken its AI engine and wrapped it around SharePoint, OneDrive, and the rest of Microsoft 365. The result works like a personal assistant that understands all proprietary organizational data to which it has access. It’s so good, you may want to help your personal assistant find a new career.

While ChatGPT can also function as a personal assistant, there’s one huge difference: ChatGPT doesn’t (or shouldn’t) have access to your organization’s internal data.

Let’s unpack that.

2. Copilot and ChatGPT use wildly different datasets

ChatGPT’s answers reflect the dataset on which it’s been trained. By definition, none of your organization’s internal data makes it into that dataset—at least we hope it doesn’t.

In contrast, Copilot is fully integrated into your Microsoft 365 environment. It works within the sphere of your internal data, and it can give context-specific answers that apply to your operations. This has profound implications for use cases, security, and copyright considerations, which we’ll explore below.

3. Copilot beats ChatGPT for both internal and external operations

Copilot use cases

Microsoft Copilot has so many use cases, we can’t cover them all here. For a larger overview, check out this page from Microsoft. Click on every Microsoft application logo to see what Copilot can do in different contexts.

That said, here are a few Copilot use cases that offer low-hanging fruit for busy teams.

Drafting an important email or document. Like ChatGPT, Copilot can produce written communications for you. If you’re struggling to pin down the main points you should cover, Copilot can give you a first draft to work from.

Finding files after consolidation in SharePoint and OneDrive. For organizations that still use shared folders and mapped network drives, it’s stressful to imagine the move to cloud storage. People worry that they won’t be able to find their files. While it might sound crazy, your team actually won’t need to know where their files are. Copilot can find files with simple, natural-language prompts.

Getting the important points from a Teams chat. Let’s be honest, instant messaging has its place, but it can also bury important information as new things come up. Copilot can read chats for you, detect action items, and summarize what you need to know in a few bullets.

Summarizing an email chain (and what the team needs from you). Let’s say your boss sends you an email about the budget, referencing other email chains as well as Teams chats on the subject. Copilot can summarize the situation for you and explain in plain language what your boss is looking for.

ChatGPT use cases

Note that ChatGPT can’t access any of your organization’s proprietary data (unless someone makes a big mistake—more on that below). Secure use of ChatGPT puts a significant limit on what the AI can do to interpret internal data and advise on operations, whether internal or external.

That said, here are some common use cases for ChatGPT.

Producing written content, provided that: 1) you’re not including sensitive information in the prompt, 2) you don’t mind that other ChatGPT users could generate the same content the AI gives you, 3) you don’t mind that you may not own the copyright for the output content, and 4) you accept that someone else may already own the copyright for the output content.

Writing basic code. ChatGPT can help developers solve coding problems, although its output may not be optimized or even accurate. Also consider the fact that code can be copyrighted.

Generating customer service responses, including translation into different languages. ChatGPT excels at helping customer service agents respond to communications quickly while maintaining a positive, professional voice. The application can also translate written content to assist with cross-language customer support.

This list is by no means exhaustive. However, you can see the difference in the use cases. ChatGPT can’t (or shouldn’t) function as a personal assistant with access to your organization’s proprietary information. Microsoft Copilot is designed to work this way while protecting your information.

4. Copilot beats ChatGPT in terms of copyright implications

When an organization sources material from a human employee or contractor, the organization owns the copyright for that material, which falls into the category of work-for-hire.

Things get a little murkier when we look at AI-generated content.

ChatGPT copyright

When it comes to ChatGPT, there are two sides to the copyright question.

Can you copyright the output of ChatGPT? The answer is most likely no.

Can ChatGPT give you copyrighted material as an output, with no indication that it’s copyrighted? Potentially, since the AI has ingested copyrighted material. Note that OpenAI’s terms of service attempt to shift all risk of copyright violation back to the user.

Organizations using ChatGPT should do their due diligence to understand these implications—particularly if they’re using the AI for public-facing content for which they will claim copyright (for example, a blog post or website page). For internal communications like a quick email to a colleague, the risk of copyright violation may be less significant. However, other risks remain, and you should consult your legal counsel to get the full picture.

Microsoft Copilot copyright

Microsoft doesn’t claim any copyright for the output of Copilot. Whether organizations can claim copyright for the output of Copilot is unclear.

That said, Microsoft has committed to defend customers who are accused in court of copyright infringement for their use of work generated by Copilot. Of course, Microsoft attaches conditions and limitations to this commitment.

While this commitment isn’t a silver bullet, it’s more than anyone gets from OpenAI. Remember that OpenAI’s terms of service shift all responsibility for copyright compliance to the end user.

5. Copilot and ChatGPT are tied on factual reliability

From listing non-existent books about President Lincoln to doing math wrong, ChatGPT is gaining a bit of a reputation for unreliability. It’s not an all-knowing source of facts, but rather a stochastic text generator. It doesn’t “know” things in the way that a human being does.

The same is true of Copilot. As Microsoft explains:

“Copilot is designed to provide accurate and informative responses, based on the knowledge and your data available in the Microsoft Graph. However, answers may not always be accurate as they are generated based on patterns and probabilities in language data. Responses include references when possible, and it’s important to verify the information.”

In other words, whether you’re using ChatGPT or Copilot, you still need to fact-check anything coming out of the AI.

6. Copilot beats ChatGPT on cybersecurity

ChatGPT cybersecurity

Some sources advocate feeding proprietary information to ChatGPT so the chatbot can “inform employees using that company’s private data.” In theory, this would approximate the use of Copilot to deliver proprietary, context-specific information to employees. But it’s actually a terrible idea.

Why?

As TechTarget explains, “the publicly available version of ChatGPT uses [information entered in prompts] to learn and respond to future requests.”

In other words, you should assume you have no privacy whatsoever when you type a prompt into ChatGPT.

It’s one thing to explain this to your employees or include it in cybersecurity awareness training. But the fact is, an employee may choose to enter sensitive information anyway. This could be due to malicious intent, or to non-malicious intent coupled with a disregard for the rules.

Either way, the outcome is the same. Employees can expose your data if they type sensitive information into a ChatGPT prompt.

Microsoft Copilot cybersecurity

Here’s where Copilot really stands out. The security of your business data is baked right in. As Microsoft explains, “Copilot unlocks business value by connecting LLMs to your business data in a secure, compliant, privacy-preserving way.”

Furthermore—and this is the most important part—the article goes on to state:

“Microsoft doesn’t use customers’ data to train LLMs. We believe the customers’ data is their data, aligned to the Microsoft’s data privacy policy… Prompts, responses, and data accessed through Microsoft 365 Graph and Microsoft services aren’t used to train Copilot capabilities in Dynamics 365 and Power Platform for use by other customers. The foundation models aren’t improved through your usage. This means your data is accessible only by authorized users within your organization unless you explicitly consent to other access or use.”

In other words, Microsoft gets it. They respect your organization’s privacy, and your data isn’t going to leak out through LLM responses to other customers.

Here at Corsica Technologies, we love seeing this commitment. It gives us confidence when we recommend Copilot to customers. We know it won’t affect their cybersecurity posture—and improving that posture is one of our passions.

7. ChatGPT beats Copilot on cost

ChatGPT Plus, which includes GPT-4, costs $20 per month, while the GPT-3.5 version of ChatGPT remains free. You can read all about the differences between the two versions here.

Microsoft Copilot costs (or will cost) $30 per month, per user, for Microsoft 365 E3, E5, Business Standard, and Business Premium customers. Corsica Technologies clients can get Copilot as part of their M365 package.

Is Copilot worth it? That depends on the cost of the operational friction you’re experiencing today. Drop us a line if you want to talk through your challenges and learn more about the capabilities of Copilot.
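To make that question concrete, here’s a rough back-of-the-envelope sketch in Python. It uses only the list prices quoted above. Everything else is an assumption for illustration: the team size, the loaded hourly cost of an employee, the idea that every user would need their own paid ChatGPT subscription, and the function names themselves. It also ignores the cost of the underlying Microsoft 365 license, so treat the output as a starting point for discussion, not a quote.

```python
# Back-of-the-envelope license math using the list prices quoted in this article.
CHATGPT_PAID_PER_USER_MONTH = 20  # USD; assumes one paid subscription per user
COPILOT_PER_USER_MONTH = 30       # USD; excludes the underlying M365 license


def annual_cost(price_per_user_month: float, users: int) -> float:
    """Total yearly license spend for a team of `users` people."""
    return price_per_user_month * users * 12


def break_even_hours_per_month(price_per_user_month: float, hourly_cost: float) -> float:
    """Hours each user must save per month for the license to pay for itself."""
    return price_per_user_month / hourly_cost


# Hypothetical inputs: swap in your own numbers.
team_size = 50           # assumed headcount
loaded_hourly_cost = 60  # assumed fully loaded cost of one employee hour, in USD

copilot_total = annual_cost(COPILOT_PER_USER_MONTH, team_size)
chatgpt_total = annual_cost(CHATGPT_PAID_PER_USER_MONTH, team_size)

print(f"Copilot per year:      ${copilot_total:,.0f}")
print(f"Paid ChatGPT per year: ${chatgpt_total:,.0f}")
print(f"Difference per year:   ${copilot_total - chatgpt_total:,.0f}")
print(
    "Copilot break-even: "
    f"{break_even_hours_per_month(COPILOT_PER_USER_MONTH, loaded_hourly_cost):.2f} "
    "hours saved per user per month"
)
```

With these assumed inputs, the gap between the two tools is $6,000 per year for the whole team, and Copilot pays for itself if it saves each user half an hour a month. Your own headcount and labor costs will shift those numbers.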

Copilot vs. ChatGPT: The takeaway

For Microsoft customers, there’s really no contest. Copilot beats ChatGPT any day, on any task.

Why?

Because Copilot protects your organization’s proprietary data and offers context-specific results based on that data. You just can’t get that one-two punch from ChatGPT.

Here at Corsica Technologies, we’re excited to see where our customers go with Copilot. This is only the beginning, and it’s a great time to jump in and start optimizing your operations with AI. The key is to engage expert AI consulting services to maximize the value of AI at your organization. Reach out to us today, and let’s explore what Copilot can do for your business.